- Blog

- Kryptonite bike lock

- Collective goods

- Download tavern master

- Rayman raving rabbids tv party ending

- Gray newel rainy daze

- Skype for mac free video calls

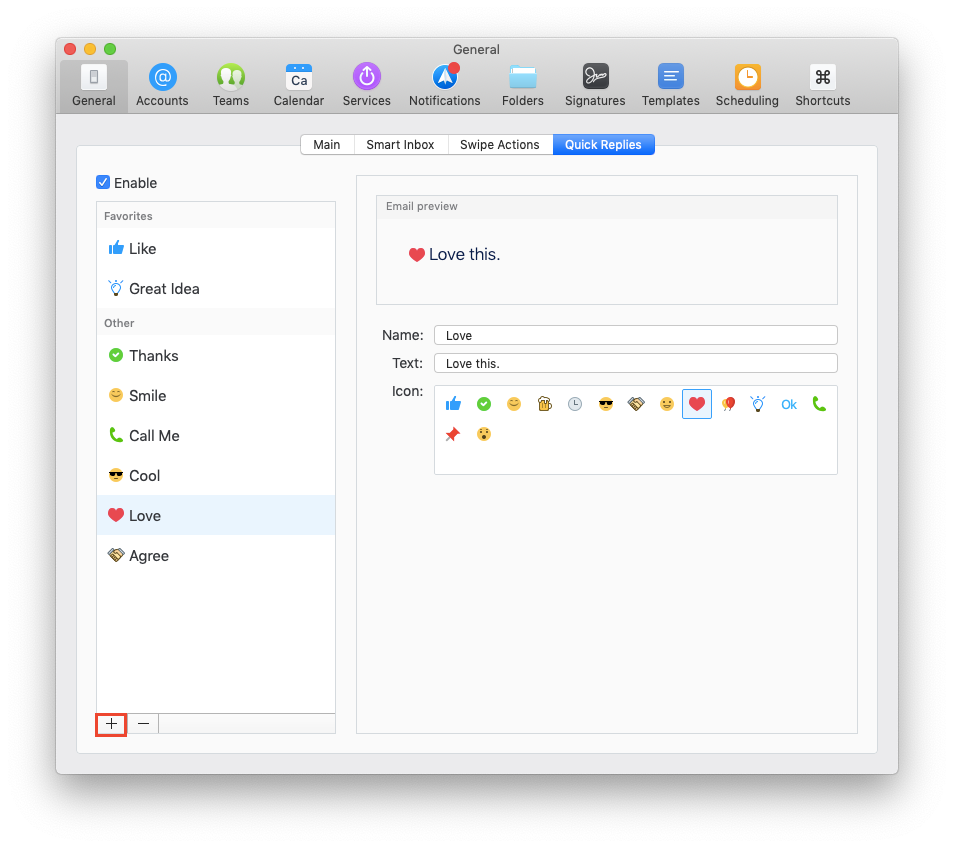

- Spark for mac troubleshoot

- Thomas battman

- Noiseware public

- The unarchiver rar mac os x 10-6-8

- Download latest andreamosaic

- Gpx editor will not save gpx file

- Really stupid game

- Stykz models

- Clicker heroes save editor

# Spark for Mac Troubleshoot

After reading Chapter 1, you should now be familiar with the kinds of problems that Spark can help you solve. It should also be clear that Spark solves problems by making use of multiple computers when data does not fit on a single machine or when computation is too slow. If you are newer to R, it should also be clear that combining Spark with data science tools like ggplot2 for visualization and dplyr for data transformations brings a promising landscape for doing data science at scale. We also hope you are excited to become proficient in large-scale computing.

In this chapter, we take a tour of the tools you’ll need to become proficient in Spark. We encourage you to walk through the code in this chapter because it will force you to go through the motions of analyzing, modeling, reading, and writing data. In other words, you will need to do some wax-on, wax-off, repeat before you get fully immersed in the world of Spark.

In Chapter 3 we dive into analysis followed by modeling, with examples that use a single-machine cluster: your personal computer. Subsequent chapters introduce cluster computing and the concepts and techniques that you’ll need to successfully run code across multiple machines.

From R, getting started with Spark using sparklyr and a local cluster is as easy as installing and loading the sparklyr package and then installing Spark using sparklyr. However, we assume you are starting with a brand new computer running Windows, macOS, or Linux, so we’ll walk you through the prerequisites before connecting to a local Spark cluster.

Although this chapter is designed to help you get ready to use Spark on your personal computer, it’s also likely that some readers will already have a Spark cluster available or might prefer to get started with an online Spark cluster.
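The local getting-started steps described in this introduction (install and load sparklyr, install Spark through sparklyr, then connect to a local cluster) can be sketched in R roughly as follows. This is a minimal sketch under assumptions, not the chapter's own listing: it assumes a compatible Java runtime is already installed as a prerequisite, that the machine can reach CRAN and the Spark download mirrors, and that the default Spark version chosen by `spark_install()` is acceptable. The variable name `sc` is only illustrative.

```r
# Install the sparklyr package from CRAN (only needed once per machine).
install.packages("sparklyr")

# Load sparklyr into the current R session.
library(sparklyr)

# Download and install a local copy of Spark.
# Assumption: the default version picked by sparklyr is fine; a specific
# version can be requested through the `version` argument instead.
spark_install()

# Connect to a local Spark cluster running on this computer.
sc <- spark_connect(master = "local")

# ... analysis code that uses the connection `sc` would go here ...

# Close the connection when finished.
spark_disconnect(sc)
```

If the connection step fails on a fresh machine, a missing or incompatible Java installation is a common cause, so that prerequisite is worth checking first.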